Ask five security vendors to explain the difference between a vulnerability assessment and a penetration test, and you’ll get five answers that all sound vaguely correct and none of which help you decide what to buy next quarter. This guide fixes that.

We’ll cover what each one actually is, what it isn’t, where the confusion comes from, and how to decide which you need — or more often, how to run both without paying twice for the same coverage.

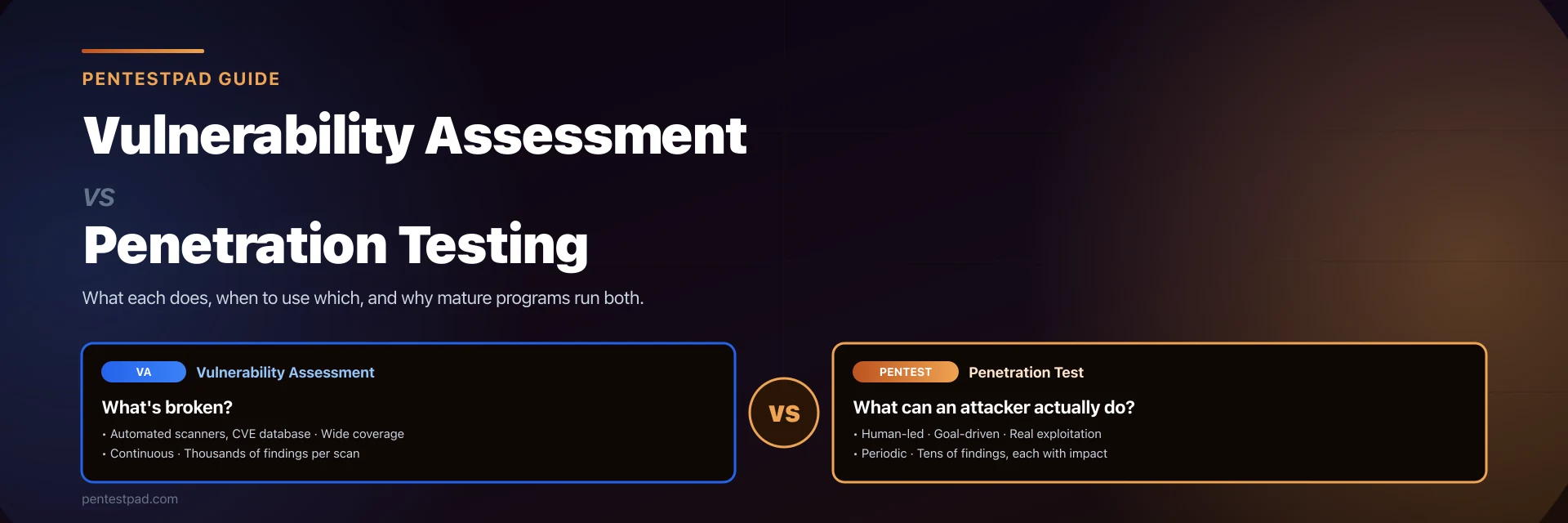

The One-Sentence Version

- A vulnerability assessment tells you what’s broken.

- A penetration test tells you what a motivated attacker would actually do about it.

Everything else is detail. The rest of this article is that detail, because in practice the difference decides budgets, compliance outcomes, and whether your next breach is embarrassing or career-ending.

What Is a Vulnerability Assessment?

A vulnerability assessment is a mostly-automated, breadth-first inventory of known weaknesses across a defined scope. A scanner (Nessus, Qualys, OpenVAS, Rapid7 InsightVM) fingerprints every host it can reach, matches the fingerprints against a CVE database, and produces a report — often thousands of lines long — ranked by CVSS severity.
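Here is a minimal Python sketch of what a scanner does with its fingerprints. Everything in it is an assumption made for illustration: the `SIGNATURES` table, the placeholder CVE ids, and exact-version matching. Real scanners match version ranges against much larger vendor feeds. The severity bands follow the standard CVSS v3.x qualitative rating scale.

```python
from dataclasses import dataclass

# Hypothetical signature feed: (product, exact version) -> (CVE id, CVSS v3 base score).
# The CVE ids are placeholders; real scanners match version ranges against large vendor feeds.
SIGNATURES = {
    ("openssh", "7.2p1"): ("CVE-EXAMPLE-0001", 5.3),
    ("apache", "2.4.49"): ("CVE-EXAMPLE-0002", 9.8),
    ("vsftpd", "2.3.4"): ("CVE-EXAMPLE-0003", 10.0),
}


def severity_band(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


@dataclass
class Finding:
    host: str
    port: int
    cve: str
    cvss: float
    severity: str


def assess(fingerprints: dict[str, list[tuple[int, str, str]]]) -> list[Finding]:
    """Match every fingerprinted service on every host against the signature feed."""
    findings = []
    for host, services in fingerprints.items():
        for port, product, version in services:
            match = SIGNATURES.get((product.lower(), version))
            if match is None:
                continue  # not in the feed, so the scanner stays silent
            cve, score = match
            findings.append(Finding(host, port, cve, score, severity_band(score)))
    # Highest CVSS first, the way most scanner reports are ordered.
    return sorted(findings, key=lambda f: f.cvss, reverse=True)


report = assess({
    "10.0.0.5": [(22, "OpenSSH", "7.2p1"), (80, "Apache", "2.4.49")],
    "10.0.0.9": [(21, "vsftpd", "2.3.4"), (443, "nginx", "1.25.3")],
})
for f in report:
    print(f"{f.severity:<8} {f.cvss:>4}  {f.host}:{f.port}  {f.cve}")
```

The nginx service produces no finding because its version is not in the feed. For the same reason, a scanner cannot flag a zero day.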

What a vulnerability assessment is good at:

- Coverage. It will touch every IP in your range, every port, every service banner. Nothing hides by accident.

- Speed. A full scan of a /24 finishes in hours, not days.

- Repeatability. Run it every night, every week, every quarter — the methodology is identical each time, which makes trend data meaningful.

- Compliance paperwork. PCI DSS explicitly requires quarterly internal and external vulnerability scans. HIPAA assessments, SOC 2 audits, and most insurance questionnaires routinely ask for evidence of periodic scanning.
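Repeatability is what makes week-over-week comparison possible. Here is a minimal sketch, assuming each finding is keyed by a hypothetical (host, port, finding id) tuple with placeholder ids:

```python
# A finding is identified by (host, port, finding id); the ids below are placeholders.
Key = tuple[str, int, str]


def diff_scans(previous: set[Key], current: set[Key]) -> dict[str, set[Key]]:
    """Compare two scans that were run with the same configuration."""
    return {
        "new": current - previous,         # appeared since the last run
        "fixed": previous - current,       # gone since the last run
        "persisting": previous & current,  # still open
    }


last_week = {("10.0.0.5", 80, "CVE-EXAMPLE-0002"), ("10.0.0.9", 21, "CVE-EXAMPLE-0003")}
this_week = {("10.0.0.9", 21, "CVE-EXAMPLE-0003"), ("10.0.0.12", 3389, "CVE-EXAMPLE-0004")}

delta = diff_scans(last_week, this_week)
for bucket in ("new", "fixed", "persisting"):
    print(bucket, sorted(delta[bucket]))
```

The comparison only holds if both runs used the same scope, credentials, and plugin set. Otherwise a finding in the `fixed` bucket may simply not have been scanned this time.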

What a vulnerability assessment is bad at:

- Context. It cannot tell you that CVE-2023-12345 is actually unreachable because of a WAF rule, or that the “medium” finding on a file server is critical because that file server holds your signing keys.

- Chains. Real breaches almost never exploit a single high-severity CVE. They chain a misconfiguration, a leaked token, and a trust relationship into full compromise. No scanner sees this.

- Business logic. If your pricing endpoint lets a logged-in user set `discount_percent=100`, a scanner will happily report zero vulnerabilities on that endpoint.

- Zero days and novel attacks. A scanner can only flag what’s already in its signature database.
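The pricing example shows why there is no signature for a scanner to match. The sketch below uses a hypothetical tier-based discount rule (`MAX_DISCOUNT_BY_TIER`) and hypothetical function names. Both handlers accept perfectly well-formed input, but only one enforces the business rule.

```python
from decimal import Decimal

# Hypothetical business rule: the largest discount each account tier may apply.
MAX_DISCOUNT_BY_TIER = {"standard": 0, "partner": 15, "enterprise": 25}


def price_vulnerable(base: Decimal, discount_percent: int) -> Decimal:
    # Trusts the client-supplied discount. Every request is well-formed,
    # so there is no signature for a scanner to match.
    return base * (100 - discount_percent) / 100


def price_checked(base: Decimal, discount_percent: int, tier: str) -> Decimal:
    # Enforces the rule server-side, against the caller's tier rather than the request body.
    allowed = MAX_DISCOUNT_BY_TIER.get(tier, 0)
    if not 0 <= discount_percent <= allowed:
        raise PermissionError(
            f"discount of {discount_percent}% exceeds the {allowed}% allowed for tier {tier!r}"
        )
    return base * (100 - discount_percent) / 100


print(price_vulnerable(Decimal("49.99"), 100))  # the order goes through for free
print(price_checked(Decimal("200"), 10, "partner"))
try:
    price_checked(Decimal("49.99"), 100, "standard")
except PermissionError as err:
    print("rejected:", err)
```

Finding the first handler takes someone who reads the checkout workflow and asks what the discount field is supposed to allow. That is a pentester's job, not a scanner's.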

Vulnerability assessments are what you run often, over a wide scope, to stay on top of the known-bad.

What Is a Penetration Test?

A penetration test is a human-led, depth-first simulation of a real attack against a defined target, usually with a defined objective (“get domain admin,” “extract customer data,” “reach the payment processor”). A tester — or small team — starts with the same scanner output you’d get from a vulnerability assessment, but then spends most of their time on everything a scanner can’t do: chaining weaknesses together, exploiting misconfigurations, abusing business logic, pivoting through trust relationships, and in the end, proving (or disproving) that a realistic attack path exists.

What a penetration test is good at:

- Proof of exploitability. Instead of “this port is open and might be risky,” you get “here is the shell we popped, here is the data we extracted, here are the screenshots.”

- Chaining. A good pentester will happily use five “low” findings to reach crown-jewel data, which is exactly how real attackers work.

- Business-logic flaws. Authorization bypasses, race conditions, insecure workflows — the whole category of vulnerabilities that exist above the CVE layer.

- Prioritization that actually helps. After a pentest you know which two findings matter most, not “fix these 4,372 mediums.”
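Chaining is easiest to picture as a graph search. In this sketch, each hypothetical "low" finding is an edge that moves the attacker from one position to another, and a breadth-first search finds the shortest chain from the internet to the objective. The positions and findings are invented for illustration. In a real pentest, the hard part is discovering the edges, which the code simply assumes are already known.

```python
from collections import deque

# Hypothetical findings, each rated "low" in isolation: (from_position, to_position, finding).
EDGES = [
    ("internet", "web-app", "verbose error page leaks internal hostname"),
    ("web-app", "ci-server", "CI dashboard reachable from app subnet"),
    ("ci-server", "deploy-token", "build logs print a deploy token"),
    ("deploy-token", "prod-k8s", "deploy token has cluster-wide write access"),
    ("prod-k8s", "customer-db", "DB credentials stored as plain env vars"),
    ("internet", "marketing-site", "outdated CMS plugin (no known exploit)"),
]


def attack_path(edges, start, goal):
    """Breadth-first search for the shortest chain of findings from start to goal."""
    graph = {}
    for src, dst, finding in edges:
        graph.setdefault(src, []).append((dst, finding))
    came_from = {start: None}  # position -> (previous position, finding used to get here)
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            break
        for nxt, finding in graph.get(node, []):
            if nxt not in came_from:
                came_from[nxt] = (node, finding)
                queue.append(nxt)
    if goal not in came_from:
        return None  # no chain reaches the objective
    chain, node = [], goal
    while came_from[node] is not None:
        prev, finding = came_from[node]
        chain.append(finding)
        node = prev
    return list(reversed(chain))


path = attack_path(EDGES, "internet", "customer-db")
for step, finding in enumerate(path, 1):
    print(f"{step}. {finding}")
```

The same structure explains where the prioritization comes from. Removing any single edge on the returned path breaks this particular chain, which is why a pentest can point at a handful of fixes instead of thousands.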

What a penetration test is bad at:

- Coverage. A tester physically cannot look at every asset in your estate. Scope has to be chosen.

- Frequency. A thorough pentest takes a week or more of senior engineering time. You’re not running one weekly.

- Cost per finding. On a pure “bugs-per-dollar” basis, a vulnerability assessment wins every time. That’s not the right metric, but it is the argument finance teams reach for.

- Compliance breadth. Some compliance frameworks want evidence of scanning across the whole estate, not depth on a slice of it.

Pentests are what you run periodically, over a narrow scope, to prove that real attackers can or cannot get what they want.

Side-by-Side Comparison

| Dimension | Vulnerability Assessment | Penetration Test |
|---|---|---|
| Primary question | What weaknesses exist? | What can an attacker actually do with them? |
| Driven by | Automated scanners + CVE databases | Human testers with a goal |
| Scope | Wide (every host, every service) | Narrow and deep (a defined target or objective) |
| Duration | Hours per scan | Days to weeks per engagement |
| Frequency | Continuous / weekly / monthly | Quarterly, annually, or after major changes |
| Exploitation | Detection only, no actual compromise | Real (controlled) exploitation of findings |
| Business logic flaws | Missed | A primary focus |
| Report length | Thousands of findings, mostly noise | Tens of findings, each with impact |
| Typical cost | Low (tooling subscription) | Higher (senior human time) |
| Compliance role | Routine “are we scanning” checkbox | Proof of security posture for high-trust audits |

Where the Confusion Comes From

Half the industry uses the term “penetration test” to describe what’s really a vulnerability scan with a PDF glued on the front. You know the deliverable: 200 pages of Nessus output, no narrative, no exploitation, no prioritization. The tester ran a scanner, ran a second scanner to confirm, and charged for a week of work.

This happens for two reasons:

- Buyers can’t tell the difference at procurement time, so cheap vendors win the RFP.

- Compliance frameworks are sometimes vague — a checkbox that says “annual penetration test” doesn’t define what a real one looks like.

A real penetration test has:

- A stated objective beyond “run the scanner”

- Manual validation of at least the high-severity findings

- Exploitation chains, not just individual CVE lists

- A narrative that walks the reader from foothold to impact

- Remediation guidance specific to your environment, not copy-pasted from a CVE database

If your last pentest report didn’t have those things, it was a vulnerability assessment with extra margin.
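If you review vendor deliverables regularly, you can encode that checklist as a simple procurement check. The field names below are hypothetical. They reflect what a reviewer might record while reading a report and are not a standard schema.

```python
from dataclasses import dataclass


@dataclass
class PentestReport:
    # Hypothetical fields a reviewer fills in while reading a vendor deliverable.
    objective: str                          # e.g. "reach the payment processor"
    high_findings: int
    manually_validated_highs: int
    attack_chains: int                      # documented multi-step exploitation chains
    has_foothold_to_impact_narrative: bool
    remediation_environment_specific: bool


def missing_criteria(r: PentestReport) -> list[str]:
    """Return every checklist item the report fails; an empty list means it passes."""
    gaps = []
    objective = r.objective.strip().lower()
    if not objective or objective == "run the scanner":
        gaps.append("no stated objective beyond running a scanner")
    if r.manually_validated_highs < r.high_findings:
        gaps.append("high-severity findings not all manually validated")
    if r.attack_chains == 0:
        gaps.append("no exploitation chains, only individual findings")
    if not r.has_foothold_to_impact_narrative:
        gaps.append("no narrative from foothold to impact")
    if not r.remediation_environment_specific:
        gaps.append("remediation copied from generic CVE text")
    return gaps


pdf_wrapped_scan = PentestReport("run the scanner", 12, 0, 0, False, False)
for gap in missing_criteria(pdf_wrapped_scan):
    print("-", gap)
```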

When to Use Which

Run a vulnerability assessment when you need to:

- Satisfy a compliance requirement for periodic scanning (PCI DSS, HIPAA, SOC 2)

- Keep an ongoing baseline of known CVEs across your estate

- Track remediation progress week-over-week

- Flag newly exposed assets after cloud provisioning

- Feed data into a risk scoring model

Run a penetration test when you need to:

- Prove to a customer, board, or regulator that your controls actually hold against a motivated attacker

- Validate a new product before launch, a new architecture before rollout, or a new M&A integration before go-live

- Investigate an incident and understand what an attacker could have done

- Go beyond CVE chasing and find the business-logic and chained-privilege issues that scanners miss

- Test people and process (red team), not just systems

Run both, in sequence, when you need to:

- Meet serious compliance frameworks (ISO 27001, FedRAMP, NIS2) that expect continuous scanning and periodic testing

- Mature a security program beyond “we ran a scanner, we’re good”

- Get real, defensible answers to “how secure are we?” from executive leadership

Why Mature Programs Always Run Both

Treating vulnerability assessments and pentests as an either/or choice is a false binary. Every mature security program runs vulnerability assessments continuously and penetration tests periodically, and the two feed each other:

- The scanner tells the pentester where to start and what’s already known, so the pentest isn’t wasted on finding things a tool could have found.

- The pentester tells the scanner operator which findings actually matter, so the 4,000-line scanner output becomes a 50-line remediation plan.

- The patch cycle kicks off from scanner data; the architecture decisions kick off from pentest data.

- Compliance gets satisfied by both; actual security gets improved mostly by the pentest.
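Turning a long scanner export into a short remediation plan mostly comes down to two steps. First, group per-host findings by the fix that closes them. Second, put pentest-validated issues first. The minimal sketch below assumes findings arrive as hypothetical `(host, finding_id, fix)` tuples and that the pentest produced a set of validated finding ids.

```python
from collections import defaultdict


def remediation_plan(scanner_findings, validated_ids):
    """Collapse per-host scanner findings into one line per fix.

    scanner_findings: iterable of (host, finding_id, fix) tuples.
    validated_ids: finding ids the pentest proved exploitable or used in a chain.
    """
    hosts_by_fix = defaultdict(set)
    ids_by_fix = defaultdict(set)
    for host, finding_id, fix in scanner_findings:
        hosts_by_fix[fix].add(host)
        ids_by_fix[fix].add(finding_id)
    plan = [(bool(ids_by_fix[fix] & validated_ids), len(hosts), fix)
            for fix, hosts in hosts_by_fix.items()]
    # Pentest-validated fixes first, then by number of affected hosts, then alphabetically.
    plan.sort(key=lambda item: (not item[0], -item[1], item[2]))
    return plan


findings = [
    ("10.0.0.5", "F-101", "Upgrade OpenSSL to the vendor-patched build"),
    ("10.0.0.6", "F-101", "Upgrade OpenSSL to the vendor-patched build"),
    ("10.0.0.7", "F-101", "Upgrade OpenSSL to the vendor-patched build"),
    ("10.0.0.9", "F-230", "Disable SMBv1"),
    ("10.0.0.10", "F-230", "Disable SMBv1"),
    ("10.0.0.20", "F-377", "Rotate the CI deploy token and scope it to one namespace"),
]
for validated, host_count, fix in remediation_plan(findings, validated_ids={"F-377"}):
    marker = "[validated]" if validated else "           "
    print(f"{marker} {host_count:>3} host(s)  {fix}")
```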

The cost argument disappears once you look at what each one is: a vulnerability assessment is infrastructure, priced like a subscription. A pentest is a project, priced like a consulting engagement. They are not substitutes. They are different products that solve different problems, and mature programs budget for both.

How PentestPad Fits In

Most teams run their vulnerability scans in one tool, their pentests in another, and their reporting in a third — which means findings get re-typed, context gets lost, and the deliverable arrives weeks after the work is done. PentestPad collapses that last step.

- Import scanner findings (Nessus, Burp, Nuclei, custom feeds) directly into a project

- Layer manual pentest findings on top, with full evidence, CVSS scoring, and remediation

- Generate a client-ready report in DOCX or PDF in minutes, not days, via our pentest report generator

- Hand the client a portal where they can track remediation, request retests, and close findings as they go
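Here is a rough sketch of what importing scanner findings involves: normalizing a Nessus export into plain records. It assumes the `.nessus` (NessusClientData_v2) XML format and reads only a few fields. It illustrates the idea and is not PentestPad's importer. The sample host, plugin ids, and CVE id are placeholders.

```python
import xml.etree.ElementTree as ET

# Placeholder export in the NessusClientData_v2 layout (ReportHost > ReportItem).
SAMPLE = """<NessusClientData_v2>
  <Report name="weekly">
    <ReportHost name="10.0.0.5">
      <ReportItem port="443" svc_name="www" protocol="tcp" severity="3"
                  pluginID="00001" pluginName="Example TLS library out of date">
        <cvss3_base_score>7.5</cvss3_base_score>
        <cve>CVE-EXAMPLE-0005</cve>
      </ReportItem>
      <ReportItem port="0" svc_name="general" protocol="tcp" severity="0"
                  pluginID="00002" pluginName="Example informational plugin"/>
    </ReportHost>
  </Report>
</NessusClientData_v2>"""


def parse_nessus(xml_text: str) -> list[dict]:
    """Flatten ReportItems into dicts, skipping informational (severity 0) results."""
    root = ET.fromstring(xml_text)
    findings = []
    for host in root.iter("ReportHost"):
        for item in host.iter("ReportItem"):
            severity = int(item.get("severity", "0"))
            if severity == 0:
                continue
            cvss = item.findtext("cvss3_base_score")  # None if the element is absent
            findings.append({
                "host": host.get("name"),
                "port": int(item.get("port", "0")),
                "plugin_id": item.get("pluginID"),
                "title": item.get("pluginName"),
                "severity": severity,
                "cvss3": float(cvss) if cvss else None,
                "cves": [c.text for c in item.findall("cve")],
            })
    return findings


for record in parse_nessus(SAMPLE):
    print(record)
```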

The platform doesn’t replace your scanner, and it doesn’t replace your tester — it replaces the weeks of Word-editing at the end of every engagement.

Frequently Asked Questions

Is VAPT the same as a penetration test?

No. VAPT stands for “Vulnerability Assessment and Penetration Testing.” It’s an umbrella term for running both activities together — typically a vulnerability scan followed by a human-led penetration test on the same scope. A pentest on its own is one half of VAPT.

Can a vulnerability assessment replace a pentest?

No. A vulnerability assessment finds known weaknesses; it does not validate whether they are exploitable, chain them into an attack path, or catch business-logic issues. For a regulated industry or a product launch, a scan alone is not enough.

Can a pentest replace a vulnerability assessment?

Also no. A pentest is a point-in-time snapshot over a narrow scope. You still need continuous scanning to catch the CVE that landed in your environment the week after the pentest ended.

How often should we run each one?

Vulnerability scans should run at least weekly — continuously if you can. Penetration tests should run at least annually, plus before any major release, architecture change, or compliance audit. Organizations under active regulatory scrutiny often run pentests quarterly.

What about automated or AI-driven pentests?

Automated pentest platforms (including PentestPad’s own CLI-based AI pentest agent) are a powerful middle ground: faster and cheaper than a full human engagement, deeper than a vulnerability scanner, and able to run more often than a traditional pentest. They don’t replace a senior human tester for a high-stakes engagement, but they dramatically extend how often you can validate your controls between formal engagements.

Do compliance frameworks care which we run?

Yes, and differently. PCI DSS explicitly requires quarterly vulnerability scans, and HIPAA and SOC 2 auditors routinely expect evidence of regular scanning. PCI DSS also requires penetration testing at least annually, and ISO 27001, FedRAMP, and most enterprise procurement processes expect periodic penetration testing as well. NIS2 and DORA, the newer EU frameworks, are increasingly prescriptive about both. If you’re selling into regulated industries, you should assume both are required.

What does a good pentest deliverable look like?

A good deliverable is narrative-driven: it explains the attack path from foothold to impact, prioritizes findings by business risk (not just CVSS), includes reproducible proof-of-concept steps, and hands remediation owners clear, specific guidance. If you’re curious what that looks like in practice, our penetration test report template is a reasonable baseline.
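Prioritizing by business risk rather than CVSS alone can be sketched as a weighting function. The weights, the 1-to-5 criticality scale, and the findings below are hypothetical; they echo the WAF and signing-key examples from earlier. The point is only that context can reverse a CVSS ranking.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    cvss: float
    asset_criticality: int   # hypothetical scale: 1 = lab box, 5 = holds signing keys or customer data
    reachable: bool          # reachable from the attacker's realistic starting point
    exploited_in_test: bool  # demonstrated by the tester, alone or as part of a chain


def business_risk(f: Finding) -> float:
    if not f.reachable:
        return 0.0  # e.g. blocked by a WAF rule or network segmentation
    score = f.cvss * f.asset_criticality
    if f.exploited_in_test:
        score *= 2  # hypothetical weight: a proven exploit outranks a theoretical one
    return score


findings = [
    Finding("RCE in admin panel, blocked by WAF rule", 9.8, 4, False, False),
    Finding("Outdated TLS library on lab server", 9.1, 1, True, False),
    Finding("Weak share permissions on signing-key server", 5.4, 5, True, True),
]

by_cvss = sorted(findings, key=lambda f: f.cvss, reverse=True)
by_risk = sorted(findings, key=business_risk, reverse=True)
print("CVSS order:         ", [f.title for f in by_cvss])
print("Business-risk order:", [f.title for f in by_risk])
```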

Date

15 April 2026

Tags

vulnerability assessment, penetration testing, vapt, vulnerability scanning, pentest, security testing, compliance